Program notes for the artistic documentation

On documentation of artistic works and on documentation of reflection

About the playback of sound files

Hardware, physical controllers

General notes on the music recordings

Hvor i Rømmegrøten er Melkeveien?

Feedback instrument performances

“Irrganger” installation (Labyrinths)

The Concert, December 1st 2007

On documentation of artistic works and on documentation of reflection

The artistic works and the etudes are submitted for two reasons. Obviously, the artistic works are submitted for their artistic value, but in the context of the project they also represent documentation of reflection during the process. The works and the etudes show aspects of what I have been working on, and how I have tried to solve musical problems. They also show what kind of approach to improvisation I have taken during different parts of the process, and it is expected that the final concert will contain many elements whose origin can be traced back to the etudes. This document is presented not as reflection, but as program notes to the artistic documentation. Even so, it also forms part of the reflection, as the musical examples will provide a reflection of the thought processes involved in the project work. Parts of the artistic result are presented here, but it should be noted that the artistic result should also be read as reflection in the course of a process.

The final concert in Trondheim on December 1st, 2007 forms a substantial part of the artistic documentation, together with the music examples and the instrument presented in this document.

About the playback of sound files

The artistic documentation contains sound files for each project documented. The written description sometimes refers to a specific position in time within a sound file. Clicking a link in this (HTML) document plays the corresponding sound file, but the application used for playback will vary according to the reader’s browser setup. Some playback applications do not show the elapsed time while playing an audio file. If this is the case, the reader is encouraged to open the audio file in a playback application with a time display. If reading this document online, use “Save as…” to save the file to disk. The document is also distributed on DVD, in which case the audio files can be found in the “wav” folder on the DVD. This folder contains subfolders for each project, named accordingly.

The instrument: ImproSculpt

ImproSculpt is a musical instrument with some compositional techniques implemented as an integral part of the instrument. ImproSculpt is both event-based (note for note, like midi) and processing-based (sample manipulation). It utilizes live sampling as a significant timbral source.

The instrument has gone through several stages of development during the course of the project. Development of physical controllers (hardware) for the instrument has gone hand in hand with the development of its software part. For the hardware, the development work has largely consisted of choosing among available commercial hardware and putting the parts together to form a physical control system for the instrument. Simple programming of the hardware is considered part of the physical controller development. The software part of the instrument was developed and implemented bit by bit from the ground up using the Csound and Python languages. As the instrument (hardware and software) has been developed over the whole time span of the project, it is not feasible to describe every intermediate state. The instrument is described here as it is at the time of writing (fall 2007). Where appropriate, remarks are made in connection with the music examples to explain the state of the instrument at the time of the recording.

The instrument as an artistic product

The instrument developed during the course of this project is considered part of the artistic documentation, and the author considers the instrument an artistic work in itself. This is especially true for the software part of the instrument. The hardware part represents a custom setup for ImproSculpt as I use it when I perform, and this setup can be viewed as a special case of using the software (one that other performers using the software will not necessarily be able to duplicate). Obviously, the notion of viewing an instrument such as ImproSculpt as an artistic work is debatable. One might argue that the instrument contains artistic potential, but that this potential needs to be realized before it can be called an artistic work. The following is an argument for considering the instrument a work of art. A composed piece of music, as represented by a musical score, is commonly regarded as a work of art even if it is never performed. The performance of the piece is commonly considered a separate artistic action (a separate artistic work). It follows that the artistic potential represented by the composed piece is commonly regarded as an artistic work. The musical score is not in itself the work, but a notation of the idea of the work; the work itself remains abstract until it is performed. In a similar manner, the software code of the ImproSculpt instrument can be viewed as artistic potential, abstract until the moment of an actual performance with the instrument.

Hardware, physical controllers

The physical controller for the instrument consists of a Marimba Lumina, a midi controller pedal board, a group of additional foot controllers, and a Lemur touch interface. The Marimba Lumina was designed by Don Buchla in the late 1990s; only about 30 instruments were produced. The pedal board is an off-the-shelf (albeit discontinued) Roland FC200. The Lemur touch interface is made by JazzMutant and is still commercially available.

Marimba Lumina

The Marimba Lumina is a percussion midi controller played with 4 mallets. It can sense which of the 4 mallets is used, so each mallet can effectively be used as a separate and independent midi controller. As a simple example, each mallet may play on a separate midi channel. The Lumina can also sense the position of a mallet on a tone bar, both at the time of attack and in continuous motion after the attack of a note. This allows mallet glides on a tone bar to be used for sending continuous controller data. Continuous controller data can also be sent by using a control strip, which is essentially a long horizontal strip that acts like a larger version of a tone bar. The Lumina also has an extra “octave” of small pads that can be used e.g. to send midi notes or other messages. Finally, the Lumina has 5 freely assignable pedal inputs.

For my use with ImproSculpt, the Marimba Lumina is set up so that each mallet plays on a separate midi channel. This allows the Lumina to limit polyphony for each mallet separately, a feature that is unique to the Marimba Lumina and freely programmable. Some effort has gone into finding an intuitive use for this feature. The current setup is: mallet 1 plays on midi channel 1 and is monophonic, mallet 4 plays on midi channel 4 and is monophonic, while mallets 2 and 3 play on midi channel 2 and are polyphonic. This means that the total number of simultaneous notes is not limited, but tight voice leading control is possible with mallets 1 and 4. On a traditional vibraphone, the performer has to take care to dampen notes when playing polyphonic legato melodies. With this setup on the Marimba Lumina, damping is not necessary, and the performer can focus more on shaping the music than on playing technique. A foot switch connected to the Lumina changes the configuration for mallets 2 and 3 so that they both play on midi channel 6 in monophonic mode. The two mallets are then treated as one monophonic entity, which facilitates fast melodic runs without any need for damping. Another foot switch is configured to transpose mallet 1 down one octave and mallet 4 up one octave, which effectively extends the pitch range of the instrument. As the Lumina has a limited range of 2.5 octaves, I consider this extension of the pitch range very useful. Another pedal input of the Lumina is used as a standard sustain pedal, in the same manner as on any midi keyboard. Finally, an expression pedal is used to send continuous controller data (routed to a master volume control in Csound).
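The per-mallet routing described above can be modelled as a small lookup of channels, each with its own polyphony limit. The sketch below is a hypothetical Python illustration, not the Lumina's own configuration format. The class names are invented here, and note-off handling is omitted. It shows why a monophonic channel removes the need for damping: a new note on that channel implicitly releases the previous one.

```python
from dataclasses import dataclass, field


@dataclass
class Channel:
    """A midi channel with an optional monophonic limit (hypothetical model)."""
    mono: bool
    sounding: list = field(default_factory=list)

    def note_on(self, pitch):
        """Start a note and return the pitches released by this event."""
        released = []
        if self.mono and self.sounding:
            # Monophonic: the new note cuts off the previous one,
            # so the performer never has to damp it manually.
            released = self.sounding[:]
            self.sounding.clear()
        self.sounding.append(pitch)
        return released


class MalletRouter:
    """Routes the four mallets to channels as in the setup described above."""

    def __init__(self):
        self.channels = {1: Channel(mono=True), 2: Channel(mono=False),
                         4: Channel(mono=True), 6: Channel(mono=True)}
        self.runs_mode = False     # foot switch: mallets 2 and 3 on ch. 6, mono
        self.octave_split = False  # foot switch: mallet 1 down, mallet 4 up

    def route(self, mallet, pitch):
        """Return (channel, transposed pitch, released pitches)."""
        if mallet in (2, 3):
            channel = 6 if self.runs_mode else 2
        else:
            channel = mallet  # mallet 1 -> ch. 1, mallet 4 -> ch. 4
        if self.octave_split:
            pitch += {1: -12, 4: 12}.get(mallet, 0)
        released = self.channels[channel].note_on(pitch)
        return channel, pitch, released
```

For example, two successive notes from mallet 1 report the first pitch as released. Mallets 2 and 3 on channel 2 can sound together until `runs_mode` merges them into monophonic channel 6.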

The pads on the Marimba Lumina are used for control purposes (not to play audible midi notes). All mapping of the data from the pads is done in Csound, but it seems natural to describe the mapping here. Two of the pads control the attack time of instruments played from the Lumina: one pad increases the attack time while the other decreases it. Another pair of pads increases or decreases the amount of modulation effects applied to instrument timbres. Yet another set of pads controls the on/off state of the independent voices in the intervalMelody generator in the ImproSculpt software. Pads are also used to start or stop recording of midi pitches for the algorithmic melody generators, and to start or stop the master clock in the ImproSculpt software. Finally, one pad is used as a tap tempo controller, enabling tempo changes for the ImproSculpt master clock. This pad also sets the delay time for the delay effects so that they follow the master clock tempo.
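The tap tempo pad can be sketched as averaging the intervals between successive taps and deriving both the clock tempo and a beat-synced delay time from the result. This is an assumed implementation for illustration only; the actual mapping lives in the ImproSculpt Csound code. `tap_tempo` and `delay_fraction` are hypothetical names, and the taps are assumed to mark beats.

```python
def tap_tempo(tap_times, delay_fraction=1.0):
    """Estimate tempo from tap timestamps in seconds.

    Returns (bpm, delay_seconds). The taps are assumed to mark beats; the
    delay time is the beat length scaled by delay_fraction (hypothetical).
    """
    if len(tap_times) < 2:
        raise ValueError("need at least two taps")
    intervals = [b - a for a, b in zip(tap_times, tap_times[1:])]
    beat = sum(intervals) / len(intervals)  # average inter-tap interval (s)
    if beat <= 0:
        raise ValueError("tap times must be increasing")
    return 60.0 / beat, beat * delay_fraction
```

Averaging over several taps smooths out small timing errors in the performer's taps. A `delay_fraction` of 0.5 would give an eighth-note delay, assuming the taps mark quarter notes.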

The pedal board

A pedal board is used for patch change, e.g. changing the instrument timbre of the Csound synthesizer to which the Marimba Lumina is connected. A total of 6 expression pedals are connected to the pedal board. These control a selection of audio processing effects: delay, ring modulation, rotary speaker, distortion, reverb, and phaser. The pedal board is programmed simply to transmit midi continuous controller data for each pedal. The Csound synthesizer doing the audio processing maps the controller data so that when an expression pedal is in its minimum position, the effect is completely bypassed. It does this by crossfading the effect output with an unprocessed version of the signal. The controller data for each pedal is also used to set modulation parameters for each of the audio effects. A single pedal controller is also set up to trigger audio live sampling in the ImproSculpt software.
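The bypass behaviour can be illustrated with a simple wet/dry crossfade driven by the pedal's controller value. This sketch assumes a linear crossfade and a plain list of samples. The Csound instrument may well use a different curve (an equal-power crossfade is a common alternative), and the function names are hypothetical.

```python
def pedal_to_mix(cc_value):
    """Map a midi controller value (0-127) to a wet/dry mix in [0, 1]."""
    if not 0 <= cc_value <= 127:
        raise ValueError("midi controller values range from 0 to 127")
    return cc_value / 127


def crossfade(dry, wet, mix):
    """Linear crossfade: mix 0 returns the unprocessed signal unchanged."""
    return [d * (1.0 - mix) + w * mix for d, w in zip(dry, wet)]


def pedal_effect(dry, wet, cc_value):
    """Blend an effect output with the unprocessed signal under pedal control."""
    return crossfade(dry, wet, pedal_to_mix(cc_value))
```

At controller value 0 the mix is exactly zero, so the output equals the unprocessed signal. This is what makes the minimum pedal position a true bypass.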

The Lemur

The Lemur is a touch screen. Whereas a traditional touch screen can only track the movement of one finger at a time, the Lemur can track several fingers simultaneously. The Lemur can be programmed via a simple graphical drag-and-drop interface provided by the manufacturer. Several different control objects (faders, multisliders, knobs, buttons, xy controllers) can be placed on the screen in a user-defined setup, and the control objects can send any midi or OSC (Open Sound Control) message. In the ImproSculpt setup, the Lemur is used to send midi continuous controller data to Csound. All mapping of the data is done within Csound or Python as appropriate.

The software, ImproSculpt

The ImproSculpt software is built with the Python and Csound programming languages. The Python code contains the main application, with event handling, timed automation, graphical user interface, and composition algorithms. Csound handles audio input/output, midi input/output, and, most importantly, audio synthesis and processing.

The source code for the software is publicly available under the GNU General Public License (GPL). This license allows users to modify the code and use it for any purpose, provided that any modified versions they distribute are also released under the GPL. The source code of the Python and Csound languages is also publicly available under open-source licenses. Documentation of the Python and Csound source code is provided for programmers wishing to build upon the software or use parts of the code in other software. Separate user documentation is also provided, documenting the use of ImproSculpt controlled by GUI or MIDI.

The artistic potential contained in the software is represented by the composition algorithms, and by the application framework that makes it possible to add new compositional modules. The composition algorithms will in some cases span both Python and Csound code, as the audio synthesis methods used are closely interrelated with the control logic. An example of this is the cloudPlayer module, based on a special granular synthesis generator (in Csound) controlled by timed automation of synthesis parameters (in Python). The melody generator named intervalMelody is an example of a serial compositional technique combined with realtime recording and modification of the musical source material (e.g. the interval series). The main compositional algorithm of the intervalMelody module is contained in Python code, while the instrumentation is done in Csound.

The intervalMelody composition module also represents an example of a general type of algorithm loosely based on fuzzy logic. This type of algorithm can be used for other kinds of musical decision-making in other composition modules. Currently, it is used by the intervalMelody and the intervalVector harmonizer modules. The algorithm is a crossover between database-based and rule-based systems for algorithmic composition: it first creates a pool (the database) of potential solutions, and then uses rules to give a fitness score to each of the solutions. We could loosely term this type of algorithm a “pool-rule” type. A problem with database-based systems (e.g. Markov chains) is that they require a large database to work well. The pool-rule type of algorithm can give satisfactory results with a fairly small pool of potential solutions. When using the algorithms for live playing, and if we do not want to start with a preloaded database, it is important that the system works well with a small pool of solutions.
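The pool-rule idea can be reduced to a few lines: score every candidate in a small pool with a set of weighted rules and keep the best one. The sketch below is a generic illustration under that reading, not the ImproSculpt code. The example rules (prefer small steps, prefer a C pentatonic pitch class, avoid repeating the previous note) and the function names are invented for the demonstration.

```python
PENTATONIC_C = {0, 2, 4, 7, 9}  # pitch classes of C major pentatonic


def pool_rule_select(pool, rules):
    """Return the candidate with the highest weighted fitness score.

    pool  -- candidate solutions (a small 'database')
    rules -- (weight, rule) pairs; each rule returns a degree of fit in [0, 1],
             in a loose fuzzy-logic spirit
    """
    def fitness(candidate):
        return sum(weight * rule(candidate) for weight, rule in rules)
    return max(pool, key=fitness)


def example_rules(previous):
    """Hypothetical melodic rules relative to the previous note."""
    return [
        (2.0, lambda p: 1 - min(abs(p - previous), 12) / 12),     # small steps
        (1.0, lambda p: 1.0 if p % 12 in PENTATONIC_C else 0.0),  # in scale
        (1.5, lambda p: 0.0 if p == previous else 1.0),           # no repeats
    ]


best = pool_rule_select([55, 60, 62, 67], example_rules(60))
print(best)  # 62
```

With previous note 60 and the pool [55, 60, 62, 67], the weighted scores favour 62, which is a small step, in the scale, and not a repetition. Because each rule returns a degree of fit rather than a yes/no answer, even a pool of four candidates yields a usable ranking.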

The following is a brief explanation of the most important compositional modules of the ImproSculpt software:

intervalMelody

This module generates melodies based on an interval series, with polyphonic voice control for up to 5 voices. The interval series can be recorded in realtime via midi, and the generated melodies are played back on melodic instruments in Csound. A rule system has been devised to select pitches for the generated melodies based on the interval series (next note = previous note + interval). The interval series is not treated as a strict serial system, but as a flexible pool of source material. That said, if the module generates only one voice of melody, and this voice does not run into a pitch register boundary, the interval series will be used strictly and without permutation. Each voice takes its harmonic relation to the other simultaneously playing voices into account when selecting its next pitch, and the preferred harmonic relations are controlled by separate rules. In addition to the interval mode described above, the intervalMelody module also has an “absolute pitch” mode, in which a series of absolute pitches is used instead of a series of intervals. The rhythm of the generated melodies is based on precomposed rhythm patterns.
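The basic rule (next note = previous note + interval) and the idea of the series as a flexible pool can be sketched for a single voice as follows. The fallback order used when a step would cross a register boundary is an assumption made for illustration: first the remaining intervals of the series, then their inversions, then a repeated note. The default register limits are also assumptions. The actual rule system, including the harmonic rules between voices, is in the ImproSculpt source code.

```python
def interval_voice(start, intervals, length, low=48, high=84):
    """Generate one melodic voice from an interval series.

    next note = previous note + interval. The series is cycled strictly; only
    when a step would leave the register [low, high] are the other intervals
    (and then their inversions) tried as a pool. Hypothetical fallback order.
    """
    notes = [start]
    position = 0  # current position in the interval series
    for _ in range(length - 1):
        prev = notes[-1]
        # Strict choice first, then the rest of the series, then inversions.
        ordered = intervals[position:] + intervals[:position]
        candidates = ordered + [-iv for iv in ordered]
        for iv in candidates:
            if low <= prev + iv <= high:
                notes.append(prev + iv)
                break
        else:
            notes.append(prev)  # nothing fits the register: repeat the note
        position = (position + 1) % len(intervals)
    return notes
```

Within the register, the output follows the series strictly. Starting on 60 with the series [2, -3, 5], five notes give 60, 62, 59, 64, 66.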

intervalVector harmonizer

The vector harmonizer is used to harmonize a melody note. Based on an interval vector[1], it generates a number of alternative chords to be used for the harmonization. A set of weighted rules is used to select among these chord suggestions. The rules consider voice leading parameters such as the total distance from chord to chord, parallel motion, common notes, voicing spread, chord repetitions etc.

The duration of the chord equals the duration of the melody (midi input) note, and the melody note is considered part of the interval vector chord.

Interval vectors may be recorded by analyzing notes played via midi input.
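The following sketch illustrates, under simplifying assumptions, two of the ingredients described above. The first is an interval-class vector computed from a set of notes, as when a vector is recorded from midi input. The second is a weighted voice-leading cost used to choose among candidate chords that contain the melody note and match the target vector. Candidate generation is left out (the candidates are given), and all chords are assumed to have the same number of voices. The weights and function names are hypothetical.

```python
def interval_vector(notes):
    """Count interval classes 1-6 between all pairs of distinct pitch classes."""
    pcs = sorted({n % 12 for n in notes})
    vector = [0] * 6
    for i, a in enumerate(pcs):
        for b in pcs[i + 1:]:
            ic = min((b - a) % 12, (a - b) % 12)  # interval class, 1..6
            vector[ic - 1] += 1
    return vector


def voice_leading_cost(prev, chord, w):
    """Weighted cost of moving from chord prev to chord (same voice count)."""
    prev, chord = sorted(prev), sorted(chord)
    moves = [b - a for a, b in zip(prev, chord)]
    distance = sum(abs(m) for m in moves)       # total distance
    common = len(set(prev) & set(chord))        # common notes (rewarded)
    parallel = all(m > 0 for m in moves) or all(m < 0 for m in moves)
    spread = chord[-1] - chord[0]               # voicing spread
    repetition = chord == prev                  # chord repetition
    return (w["distance"] * distance - w["common"] * common
            + w["parallel"] * parallel + w["spread"] * spread
            + w["repetition"] * repetition)


def harmonize(melody_note, target_vector, candidates, prev, w):
    """Pick the lowest-cost candidate containing the melody note and
    having the target interval vector."""
    valid = [c for c in candidates
             if melody_note in c and interval_vector(c) == target_vector]
    if not valid:
        raise ValueError("no candidate chord fits")
    return min(valid, key=lambda c: voice_leading_cost(prev, c, w))
```

The major-triad vector [0, 0, 1, 1, 1, 0] is shared by the minor triad. With this vector, the previous chord [60, 64, 67], melody note 64 and a high repetition weight, the nearby A minor voicing [60, 64, 69] wins over repeating the C major chord.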

randPlayer

Playback of audio samples, using live sampled segments or sound files as source. The rhythmic patterns triggering playback of segments are based on precomposed patterns. The choice of audio segment for each rhythm trigger is based on simple (controlled random) algorithms selecting from a set of segments assigned to the module. Segment assignment may be automated by subscribing to ImproSculpt’s liveSampledSegments organizer module. As an example, we could assign the 4 shortest segments currently in live sample memory at any given time. Filtering, stereo pan, and effect sends can be set with user-controlled random deviation on a per-event basis. This module is a new implementation of a similar module found in “ImproSculpt Classic” [2] (the ImproSculpt software as implemented prior to the research project). A description of how this module was inspired by the work of John Cage can be found elsewhere in this document.
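The controlled random selection can be sketched as follows. The n shortest segments in memory are assigned to the module, and for each trigger time in a precomposed rhythm pattern one of them is picked at random. A random deviation on stereo pan serves as an example of per-event variation. The segment representation (dicts with `name` and `duration`) and all function names are assumptions for illustration. They are not the interface of the liveSampledSegments organizer.

```python
import random


def shortest_segments(segments, n=4):
    """Assign the n shortest segments currently in live sample memory."""
    return sorted(segments, key=lambda s: s["duration"])[:n]


def rand_player(pattern, segments, rng, n=4, pan=0.5, pan_dev=0.2):
    """Return (time, segment name, pan) events for a precomposed rhythm pattern.

    Each trigger picks a segment at random from the assigned set, and the
    pan position deviates randomly by up to +/- pan_dev (clamped to 0..1).
    """
    pool = shortest_segments(segments, n)
    events = []
    for t in pattern:
        segment = rng.choice(pool)
        p = min(1.0, max(0.0, pan + rng.uniform(-pan_dev, pan_dev)))
        events.append((t, segment["name"], p))
    return events
```

Passing a seeded `random.Random` instance makes a run reproducible, which is handy for testing. In performance, an unseeded generator would be used.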

tidalWaterSound

A composition and synthesis module written specifically for the Flyndre installation. It is not actively used in the current version of ImproSculpt, but the activation button (“tws”) remains in the ImproSculpt main GUI panel and the synthesis instruments remain in the Csound code. The GUI controls for the module parameters have been removed from the current ImproSculpt code.

The module was written to illustrate tidal water movement, and the synthesis parameters were controlled by input parameters to the Flyndre installation. The generated audio consists of two basic elements: a filtered noise Risset glissando, and small audio particles (“beads”). The Risset glissando was used to illustrate inward (rising glissando) or outward (falling glissando) movement of the tidal water. The audio particles were used to illustrate particles moving about in the water stream. Examples of module parameters are glissando speed, glissando direction and filter Q for the gliding filters, as well as bead size, bead density, bead brightness and bead pitch spread.
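A Risset (Shepard-Risset) glissando is commonly built from components spaced an octave apart that glide together. Each component is faded in and out by a bell-shaped amplitude curve over the log-frequency range, so that components can wrap around without an audible jump. The sketch below computes only the center frequencies and gains of such a set of band-pass filters at a given time. The number of bands, the lowest frequency and the raised-cosine gain curve are assumptions, and the tidalWaterSound Csound instruments are not reproduced here.

```python
import math


def risset_filter_bank(t, speed, n_bands=6, f_low=50.0, rising=True):
    """Center frequencies (Hz) and gains of the gliding filters at time t (s).

    Bands are one octave apart and glide at `speed` octaves per second; a band
    leaving the n_bands-octave range wraps to the other end. A raised-cosine
    gain over the log-frequency range makes the wrap inaudible.
    """
    direction = 1.0 if rising else -1.0
    bands = []
    for k in range(n_bands):
        pos = (k + direction * speed * t) % n_bands  # octaves above f_low
        freq = f_low * 2.0 ** pos
        gain = 0.5 - 0.5 * math.cos(2.0 * math.pi * pos / n_bands)
        bands.append((freq, gain))
    return bands
```

Because the bands are an octave apart, the set of frequencies after one octave of glide is the same as at the start, which produces the illusion of an endless rise or fall. Setting `rising=False` reverses the direction, corresponding to the outward (falling) movement of the water.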

The partikkel opcode

The partikkel opcode for Csound was developed during the project as a very versatile granular synthesizer, allowing all forms of time-based granular synthesis (as described in Curtis Roads’ “Microsound”)[3]. The incentive for creating partikkel was to be able to seamlessly morph between different types of granular synthesis. I wrote the opcode specifications, and the C implementation was done by NTNU students Thom Johansen and Torgeir Strand Henriksen. For details regarding partikkel, refer to the Csound Manual[4]. I authored the manual page content, and the Csound manual page formatting was done by Andres Cabrera.

partikkel (single voice, midi control)

The single voice partikkel module in ImproSculpt uses the partikkel opcode for playback and granulation of live sampled segments or sound files. Partikkel takes over 40 input parameters. A number of extra parameters (outside of partikkel) are used to control features such as output routing, effects on separate outputs, an external frequency modulation oscillator etc. A selection of these parameters has been mapped for direct midi control, enabling the use of this module for realtime performance with live sampled audio segments.

partikkelCloud

This module generates 4-voice granular cloud automation, sending the automated parameter values to 4 partikkel instruments in Csound. As each cloud contains some 300 parameters, a selection of metaparameters has been created to modify the automation during realtime performance. A separate application, “Partikkel Cloud Designer”, allows a detailed and precise specification of parameter values and stores the values in preset files. Each preset contains start, middle and end values for each parameter, as well as segment duration and segment curvature for the transitions (e.g. from start to middle value). One such preset file is thought of as “a cloud”. In ImproSculpt, the cloud preset files are read into memory, and they can be sequenced. Two special presets (“interpolate” and “static”) can be used to create transitions from cloud to cloud as well as static sections.
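A single parameter in a cloud preset could be evaluated as below. The preset holds start, middle and end values, two segment durations and a curvature per segment. The exponential curve shape follows the family used by Csound's transeg opcode: curvature 0 is linear, negative values move quickly at first, and positive values move slowly at first. Whether the Partikkel Cloud Designer presets use exactly this formula is an assumption, and the preset keys are hypothetical.

```python
import math


def curve(start, end, frac, curvature):
    """Interpolate from start to end for frac in [0, 1].

    curvature 0 is linear; otherwise an exponential shape of the kind used by
    Csound's transeg (negative: fast at first, positive: slow at first).
    """
    if curvature == 0:
        return start + (end - start) * frac
    shape = (1 - math.exp(frac * curvature)) / (1 - math.exp(curvature))
    return start + (end - start) * shape


def preset_value(preset, t):
    """Value of one cloud parameter t seconds after the cloud starts."""
    d1, d2 = preset["dur1"], preset["dur2"]
    if t <= d1:
        return curve(preset["start"], preset["middle"], t / d1, preset["curve1"])
    if t <= d1 + d2:
        return curve(preset["middle"], preset["end"], (t - d1) / d2,
                     preset["curve2"])
    return preset["end"]  # hold the end value after the last segment
```

Metaparameters could then scale or offset these values during performance. That part is not modelled here.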

feedbackInstrument

This instrument uses audio feedback to create continuously changing timbres. A slow feedback eliminator was implemented in Csound, which allows a feedback signal to establish itself (using microphone input and audio output). The feedback will typically consist of the resonant frequencies of the feedback loop. These frequencies are tracked by the feedback eliminator and gradually attenuated. When the strongest frequencies have been attenuated, other resonant frequencies will emerge in the feedback loop. In this way, the feedback instrument explores and utilizes the resonant frequencies of the feedback loop one by one. External resonators can be used to modify the feedback loop, in which case physical manipulation of the resonators affects which frequencies become prominent in the audio output. The feedback instrument also has an internal resonator circuit, an internal feedback circuit, and delay lines and filtering, providing an additional level of control over the generated timbre.
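The way the slow feedback eliminator walks through the resonances of the loop can be illustrated with a toy model that leaves out all audio processing. Each resonance has a loop gain, and the strongest one dominates the feedback. The eliminator gradually deepens a notch at that frequency until another resonance takes over. The representation, the attenuation rate and the function name are assumptions for illustration; the real instrument tracks frequencies in the audio signal in Csound.

```python
def feedback_eliminator(resonances, steps, rate=0.9):
    """Toy model of a slow feedback eliminator.

    resonances maps frequency (Hz) -> loop gain. At each step the frequency
    with the highest effective gain (loop gain * notch gain) dominates; the
    eliminator attenuates it slightly by multiplying its notch gain by rate.
    Returns the sequence of dominant frequencies.
    """
    notch = {f: 1.0 for f in resonances}
    dominant = []
    for _ in range(steps):
        f = max(resonances, key=lambda fr: resonances[fr] * notch[fr])
        dominant.append(f)
        notch[f] *= rate
    return dominant
```

Consider loop gains of 1.0, 0.95 and 0.88 at 220, 330 and 440 Hz, with a rate of 0.9. The dominant frequency then moves 220, 330, 220, 440. Each notch lowers a resonance only slightly, so earlier resonances can return, giving a gradual alternation between resonances.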

For a full view of the compositional and audio processing potential of ImproSculpt, please refer to the source code and user documentation.

ImproSculpt program code and documentation

The program code for ImproSculpt is available at Sourceforge, and a zip file is also submitted here. Documentation can be found in the /docs directory of the program code. The user guide for ImproSculpt4 can also be read here.

ImproSculpt4 Program Code (zip)

ImproSculpt4 User Guide (html)

Partikkel Cloud Designer

Even though Partikkel Cloud Designer is used to compose partikkel cloud parameter automation for playback within ImproSculpt4, it is a separate application. This was done mainly because designing the details of a partikkel cloud is not a task suited for live performance, due to the large number of parameter settings involved. However, the timbral result of playing back stored parameter automation can vary greatly due to metaparameter variations, and due to the use of live sampled segments as source waveforms for the partikkel generators. In this respect, the Partikkel Cloud Designer can be viewed as a composition tool, while the cloudPlayer module in ImproSculpt can be regarded as a performance tool. For the sake of completeness, the Partikkel Cloud Designer application is provided here.

Partikkel Cloud Designer Program Code (zip)

Partikkel Cloud Designer User Guide (html)

General notes on the music recordings

The act of performing music is a natural and crucial part of a research project like this one, as part of the project is focused on developing improvisational skills. These skills are to be developed and utilized on a new instrument (ImproSculpt). A central question in the early phases of the work was “how to perform on a new instrument before the instrument has been built?” Certain parts of the instrument could be tested during development, but the instrument as a whole has been subject to continuous development over the whole course of the project. As a result of this dilemma, some of the activity during the project has been plainly to “keep in shape” as a performer, to stay in close touch with the act of performing music. If this had not been done, the project might easily have turned into a laboratory project, with the focus diverted onto smart technical solutions and programming. Keeping up an activity as a performer has worked as a periodic reminder that the result of the work is to be used for performing music. This might seem obvious, and one could argue that no reminder should be needed. However, my personal opinion is that performing music is an activity containing a lot of tacit knowledge, based on first-hand experience, and that this knowledge needs constant updating to be kept precise and correct. Small deviations from good performing practice could lead to solutions in the instrument design that are not quite optimal. Over time these could accumulate and result in an instrument that was not really usable in a performing context. This would be even clearer when performing with other musicians than when playing solo. I have been aware of the danger that I could be making an instrument designed for solo playing only, based on interplay between musician and machine and disregarding the interplay between live musicians. As the interplay between humans will naturally be richer and more nuanced than any currently possible human-machine interaction, I wanted to keep this possibility open.

I have, however, tried to select performance projects that were as closely related to the instrument development project as possible. I have also used the performance activity as a sort of “hands-on reflection” on the question: “What kind of music is it that I want to be able to play on this instrument, and how should the instrument be built to accommodate this?” I have tried to include a variety of musical genres so as not to limit the design of the instrument to only one type of music.

The music recordings thus serve as documentation of an artistic process and as documentation of test cases for small parts of the instrument under development. For a large part of the time I spent working on the project, the algorithmic composition parts of the instrument were not ready for live use. Designing the instrument with a stable, modular and expandable structure took the better part of two years, and obviously, musical performance with those parts of the instrument could not take place until they had actually been made. However, the instrument is made up of other parts than the (algorithmic) compositional automation, instrumentation and direct control being the most notable. Instrumentation is clearly regarded as part of a compositional process. Designing instrument timbres, and mechanisms that give easy access to a large number of timbres, is therefore an important part of the work in this project. It could be argued that a conventional synthesizer has a lot of timbres, and a patch change mechanism giving easy access to them. However, a commercial synthesizer will always be limited by the features the manufacturer has chosen to include. To keep maximum flexibility and freedom, the timbres therefore had to be designed and implemented from the ground up. Moreover, the freedom of expressive control over the instrument timbre is sometimes limited in commercial synthesizers, or the expressive controls might be present but not easily accessible in the performance situation. Quite a bit of reflection during music performance went into questions like “what timbral controls do I really need, and how should they be set up with physical controllers so as to be intuitively available in the performance situation?” All of the music recordings feature parts of the ImproSculpt instrument under development, but quite a lot of the recordings only use the instrument as a multitimbral synthesizer with direct midi control.

During performance work later in the project, when the compositional automation parts of the instrument had become available, I started to notice that a lot of musical situations do not really require such compositional automation. Rather, direct control of a very flexible and expressive instrument is the reasonable musical choice. I say this not to undermine the validity of using compositional automation in the instrument. Rather, I want to point out that not everything has to be used at all times, and that the task of the performing musician is just as much selecting what should not be played as what should be.

To sum up, the musical recordings represent documentation of the artistic process (as a perspective on the musical influence on the design process), they represent documentation of a “test bed” for separate parts of the instrument, and finally they also represent examples of my evolution as a performer and improviser during the project. The presentation of the different performance projects is done in a roughly chronological order.

Hvor i Rømmegrøten er Melkeveien?

(The title could be loosely translated as “Lost in porridge, where’s the Milky Way?”)

Writing:

Øyvind Brandtsegg: Composition

Marianne Meløy: Text

Performance:

Sigrid Stang: Violin

Marianne B. Lie: Cello

Else Bø: Piano

Øyvind Brandtsegg: ImproSculpt Classic

Marianne Meløy: Actor

Stian H. Pedersen: Actor

This is a piece written for children, commissioned by Trio Alpaca (Stang/Lie/Bø). No special musical considerations were made to adapt the music to the targeted audience, but Marianne Meløy wrote a story to accompany the music in the hope that the story would help hold the untrained audience’s attention. The story’s main characters are Ragnar Rex, a small planet and Lille Måne (Little Moon), his companion. The two have gotten lost in space, and the story unfolds in search of Ragnar’s home galaxy and his Sun.

The music was written during spring/summer 2004, and the piece was performed in October 2004. In other words, the music composition was done before starting the current research project, and the performance of the piece was done shortly after starting the research project.

The composition techniques used in writing the piece are similar to some of the techniques that are the subject of the research. The incentive was to explore the same techniques when “writing by hand” as when writing with algorithms. The aim was to gain insight into how I would apply musical intuition and taste to modify and refine the output of a strict rule-based composition technique. Serial techniques were used for pitch organization, both in terms of absolute pitch series and interval series.

Another technique was inspired by John Cage’s use of “sound charts”, especially in the piece “Sixteen Dances” (1950)[5]. The sound chart is a table of 64 different sounds (single notes, chords, trills etc). Composing with a sound chart, one moves around on the chart, selecting the sounds one by one. When I implemented ImproSculpt Classic (2001-2004), I used this technique as an inspiration for the randPlayer module. The randPlayer module takes live sampled sound segments and plays them back triggered by precomposed rhythm patterns. Sound segments are selected by limited random operations (e.g. selecting randomly among the 4 shortest segments currently in memory). When I composed the music for “Rømmegrøten”, I used a similar technique to devise the material played by the acoustic instruments, e.g. in the pieces “CsRec” and “CsRecSculpt”.
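Moving around on a sound chart can be sketched as a walk over an 8 x 8 grid of 64 sounds, selecting one sound per step. The move rule used here is a hypothetical stand-in chosen for illustration: a random step to a neighbouring cell, wrapping at the edges. It is not a reconstruction of Cage's procedure, nor of the randPlayer selection logic. The placeholder sound names are invented.

```python
import random

# All eight neighbouring moves (including diagonals) on the chart.
MOVES = [(dr, dc) for dr in (-1, 0, 1) for dc in (-1, 0, 1) if (dr, dc) != (0, 0)]


def walk_chart(chart, steps, rng, start=(0, 0)):
    """Select `steps` sounds by moving step by step around a square chart."""
    size = len(chart)
    row, col = start
    sounds = [chart[row][col]]
    for _ in range(steps - 1):
        dr, dc = rng.choice(MOVES)
        row, col = (row + dr) % size, (col + dc) % size  # wrap at the edges
        sounds.append(chart[row][col])
    return sounds


# A chart of 64 placeholder sound names, "s00" ... "s77" (row, column).
chart = [[f"s{r}{c}" for c in range(8)] for r in range(8)]
```

Replacing the neighbour rule with another move rule changes the character of the resulting sequence, while the chart itself stays fixed.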

Performing “Rømmegrøten” during October 2004 was a summing up of what the ImproSculpt Classic instrument was capable of providing, exposing both its limitations and its potential. This provided a starting point for the work in the research project, regarding the design of the new ImproSculpt instrument.

A studio recording of “Rømmegrøten” was made during December 2004. As research, this provided the opportunity to record live to multitrack and then edit the material. Editing an improvised performance was used as a device for artistic reflection, in that some “errors” could be corrected and overdubs could subsequently be made where needed.

CagePentaSculpt_Studio

A substantial part of this piece is made up of serially generated pitch material combined with ImproSculpt’s randPlayer, using live sampled segments as source material. Granular manipulation of live sampled segments is used as an improvised melodic/timbral voice. The drumloops are pre-recorded 2-bar samples, played back with the drumloop module in ImproSculpt Classic.

ClusterChorale2_Studio

This is a serial composition where the pitches generated from an interval series are distributed among the instruments in the trio. The main incentive for using this as part of the artistic documentation is that the sound of this piece was used as an inspiration when designing the intervalMelody module of the new ImproSculpt instrument.

ClusterChorale_Live

The live performance of the ClusterChorale piece contains granular processing of live sampled segments. Part of the “Rømmegrøten” story can be heard in this excerpt. Ragnar barely avoids being swallowed by a black hole.

CsRecSculpt_Studio

This piece relies heavily on the randPlayer module in ImproSculpt, and the music scored for the acoustic instruments is also based on the Cage-inspired techniques that inspired the design of randPlayer. A modified version of ImproSculpt Classic’s randPlayer module was used to generate chords, which were transferred to a music notation application via midi. The resulting score was modified and developed “by hand”, by listening and changing elements according to taste. In performance, ImproSculpt was used in a similar way as in the excerpt “CagePentaSculpt_Studio”.

rommegroten_TheWholeShow_Nov2004

This example contains a complete performance of the piece “Hvor i Rømmegrøten er Melkeveien”. It can be considered a reference, to put the other “Rømmegrøten” examples into the context they were written for.

Solo Recording

These are recordings of etudes and experiments in solo playing. The recordings were done during the period July to October 2005. Several different fields have been the subject of experimentation in this context.

· One aspect has been the exploration of the Marimba Lumina as a physical controller for Csound instruments, e.g. using mallet glides as a means of providing expression data, and using the Lumina’s voice leading control features (monophonic mode etc).

· Another subject for experimentation has been the tonality used for improvisation. I wanted to explore a bitonal modality, using pentatonic scales as one of the modes. The bitonal modality is not always present but is used as a means of generating harmonic and melodic tension. Pentatonic modes are used in connection with bitonality for their internal strength, e.g. a pentatonic scale can be superimposed on a diatonic scale in another key and still retain a very clear melodic and harmonic coherence.

· Yet another aspect of experimentation has been the testing of compositional automation techniques, i.e. parts of the compositionally enabled instrument under construction during the project. For these pieces, the compositional automation technique was a simple phrase recorder implemented in Csound. This module records midi note numbers as played on the Marimba Lumina (or any connected standard midi keyboard), and uses these note numbers as absolute pitches to generate melodic variations. The rhythms of the melodies are based on precomposed rhythm patterns stored as Csound ftables. This technique is serial in nature, and melodic variation is limited. During performance, variation is achieved by recording new pitches via midi, with the option of clearing all recorded pitches and starting over with a blank pitch series. A percussion part is generated as well, based on the recorded pitches and the precomposed rhythm patterns. The recorded pitch is used to determine the percussion instrument, and the filter or modulation parameters, to be used for playback of the part. The phrase recorder as used in the SoloTake etudes represents a predecessor to the intervalMelody module currently found in ImproSculpt.

· Finally, the concept of form in improvisation has been investigated in these etudes. A general shape of an overall musical form was conceived (composed, one might say) and then several improvised performances in or around this form were done as etudes.
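The phrase recorder described in the list above was implemented in Csound; the Python sketch below only models its logic under stated assumptions. Rhythm patterns stand in for the Csound ftables as plain lists of durations, rotation of the pitch series is a hypothetical way of producing a variation, and the pitch-to-percussion mapping (pitch modulo the number of instruments, a linear filter cutoff) is invented for illustration.

```python
import itertools


class PhraseRecorder:
    """Hypothetical model of a phrase recorder: stores played MIDI note numbers
    and replays them against precomposed rhythm patterns."""

    def __init__(self, rhythm_patterns):
        self.rhythm_patterns = rhythm_patterns  # list of lists of durations (beats)
        self.pitches = []

    def record(self, note_number):
        """Append a played note number to the pitch series."""
        self.pitches.append(note_number)

    def clear(self):
        """Start over with a blank pitch series."""
        self.pitches = []

    def variation(self, pattern_index, rotation=0):
        """Pair the (rotated) pitch series with one rhythm pattern."""
        if not self.pitches:
            return []
        rhythm = self.rhythm_patterns[pattern_index]
        r = rotation % len(self.pitches)
        series = self.pitches[r:] + self.pitches[:r]
        pitch_cycle = itertools.cycle(series)  # repeat pitches if the rhythm is longer
        return [(next(pitch_cycle), duration) for duration in rhythm]

    def percussion(self, pattern_index, n_instruments=4):
        """Derive a percussion part: the pitch selects instrument and filter cutoff."""
        events = []
        for pitch, duration in self.variation(pattern_index):
            instrument = pitch % n_instruments
            cutoff_hz = 200 + (pitch / 127) * 5000
            events.append((instrument, cutoff_hz, duration))
        return events
```

Recording 60, 63 and 67 and replaying them against a five-step pattern cycles the three pitches over the five durations; calling `clear()` and recording again corresponds to starting over with a blank pitch series in performance.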

vibkoralimpro2

One of the first experiments using the Marimba Lumina to control Csound instruments, exploring the voice leading made possible by using monophonic mode for each midi channel controlled by the Lumina.

berg_1

This etude is an exploration of improvisation within a fairly strict 12-tone serial paradigm. It uses a 12-tone row from Alban Berg’s violin concerto, recording the pitches into the phrase recorder as described above and improvising using the tone row as source material. The recording contains a number of audio clicks, artefacts caused by poor handling of instrument durations and envelopes in Csound.

trekant5, trekant6 (“triangle5”, “triangle6”)

These two etudes represent exploration of improvisation within a graphically conceived form (the triangle). On the large scale of the form, the triangle is represented as register versus time, starting at the extreme high and low ends of the register and moving towards a central note. The unfolding of this form takes up approximately the first one and a half minutes of the performances. The triangle is also represented in a pronounced use of the interval L3 (a small third, or 3 semitones). Further, the triangle is represented in small motifs made up of three notes, often starting with the interval L3 and continuing in contrary motion. I did several takes using the same form concept and material as described in this paragraph.
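The large-scale triangle (register versus time) can be expressed as two pitch bounds that converge on a central note. The sketch below assumes a linear convergence over 90 seconds, matching the "approximately one and a half minutes" above; the register limits (MIDI 36 and 96) and the central note (66) are hypothetical values, not taken from the etudes.

```python
def triangle_bounds(t, duration=90.0, low=36, high=96, center=66):
    """Lower and upper MIDI pitch bounds of the triangle form at time t (s).

    The bounds start at the extreme low and high ends of the register and
    converge linearly on the central note after `duration` seconds.
    """
    x = min(max(t / duration, 0.0), 1.0)  # normalised position in the unfolding
    lower = low + (center - low) * x
    upper = high - (high - center) * x
    return lower, upper


def in_triangle(pitch, t, **kwargs):
    """True if a pitch lies inside the triangle at time t."""
    lower, upper = triangle_bounds(t, **kwargs)
    return lower <= pitch <= upper
```

Halfway through the unfolding (45 seconds) the playable register under these assumptions is MIDI 51 to 81, and at 90 seconds it has narrowed to the central note alone.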

SoloTake6, SoloTake7, SoloTake13

These etudes represent exploration of the phrase recorder with percussion parts. No specific precomposed form or material was used, but all of the etudes are focused on a loosely defined minor scale mode with occasional attempts at bitonality. In general, the pieces start out with long notes exploring beating and timbral variation, moving on to melodic playing and pitch recording, ending in a somewhat similar way as they start.

Some experiments with tempo changes were done in an attempt to break out of the static nature of the phrase recorder rhythm patterns.

SoloKort3, SoloKort6

These etudes are similar to SoloTakes 6, 7 and 13, but an effort was made to compress the time spent on completing the musical form.

Hagg Quartet

These sessions were done during November 2005.

Øyvind Brandtsegg: Csound, Marimba Lumina

Eirik Hegdal: Saxophone

Ole Thomas Kolberg: Drums

Eirik Øyen: Bass, distortion, looping machine

The incentive for doing these sessions in the context of the research project was to explore the expressiveness of the Csound instruments as controlled directly via the Marimba Lumina. Playing within a fairly free improvised setting provided valuable feedback on the musical flexibility of the instrument. I found that the basic setup of the Marimba Lumina and the multitimbral Csound instrument setup were fairly good, and that the technical setup did not significantly hinder expressive performance. On the contrary, the instrument provided a basic palette to be used as a starting point for further development. I saw the need for a larger collection of instrument timbres and more modulation controllers, as well as for the compositional automation modules that were obviously missing.

Small composed sketches by Hegdal provided a starting point for the improvisations, but the sketches were never played as notated. The examples provided are excerpts from longer improvised pieces.

01_01

The composed sketch can be heard loosely played by Hegdal and me from 7:13, lasting until the end of the piece.

01_01_2

No composed sketch used.

01_02_3_a

The composed sketch can be heard used in the start of the piece, most clearly from 1:29 to 1:59.

01_02_3_b

Using the same composed sketch as for “01_02_3_a”, the only audible trace of the sketch is that I make a reference to the theme during 0:48 to 1:05.

03_03_a

The composed motif can be heard in a fragmented version played by Hegdal starting at 1:37, lasting until 2:10 (minutes:seconds).

03_03_4_bass

No composed sketch used. In parts of the piece, both Øyen and I work in the low register so there might be some confusion as to “who does what”.

04_04_bachish

No composed sketch used.

RRT

These excerpts were taken from a performance in Risør in April 2006, titled “Risør Real Time”.

Øyvind Brandtsegg: ImproSculpt

Ingrid Lode: Vocal and loop machine

Carl Haakon Waadeland: Drums

The incentive for doing this performance in the context of the research project is similar to the incentive for doing the sessions with Hagg Quartet, namely to explore the expressiveness of the Csound instruments as controlled directly via the Marimba Lumina. The RRT project represents free improvisation in a slightly different musical style than that played by the Hagg Quartet. In addition, the single voice partikkel module (in an early Csound-only version) was used for granulation of live sampled segments. The structure of the set was arranged jointly by the performers during rehearsals, and contained a few arranged musical “meeting points” used as reference or starting points for longer improvised stretches.

RRT_1

Freely improvised, the first piece of the performance.

RRT_3and4

Starting with a folk song (trad.) sung and looped by Lode, evolving into an improvised section.

RRT_7

Starting out with another folk song, “Ingen fågel flyg så høgt” (trad.), evolving into an improvised section. At 5:15 the single voice partikkel module can be heard, using live sampled segments of vocal as source material.

Motorpsycho tour

Øyvind Brandtsegg: Marimba Lumina and ImproSculpt, Vocals

Jacco van Rooj: Drums

Hans Magnus Ryan: Guitar, Vocals

Bent Sæther: Bass, Vocals

This tour consisted of 18 shows during April and May 2006, travelling from Norway to Denmark, Germany, Holland, Belgium, Switzerland and Italy. The incentive for doing this tour in the context of the research project was to explore the use of the instrument in a high-energy musical setting. Numerous aspects of performance are different when playing very loud. The listening conditions may sometimes be a bit chaotic, and the instrument timbres must be carefully crafted to cut through and be heard clearly in a massive wall of sound. The choice of notes and phrasing also affects how audible a contribution is in this musical context. A weakly formulated melodic phrase will simply not make itself heard. A weakly formulated phrase is probably less audible than a well-formulated one in any musical context, but in the Motorpsycho environment it will sometimes not be heard at all. This motivates me as a performer to create robust musical phrases, and gives immediate feedback on the robustness of a played phrase: if I could not hear what I just played, it might very well be because it was no good in the first place. In addition, Motorpsycho’s attitude to improvisation is quite different from that of other musical contexts I have played in. The improvisational attitude is somewhat similar to that of the Grateful Dead, where a key feature is that “everybody solos”, i.e. the players weave a polyphonic melodic texture through improvised interplay. Within a relatively simple framework with a regular beat and a clearly defined mode (most often minor), quite a bit of time is spent slowly building a musical situation. There is never a rush, and repetition is a virtue. It seems to me that the improvisational framework is kept simple so that the performers can spend all available energy on interplay rather than on instrumental technique.

Fast melodic phrases with a lot of notes often do not make a significant contribution to the whole, and may not be heard as anything other than background noise. Phrasing and timbre are more important than pitch, and variation in these parameters contributes significantly to the musical logic of the style.

The state of the ImproSculpt instrument during the Motorpsycho tour was that everything was coded in Csound, as the Python code framework was not yet ready for use in live performance. The following is a basic outline of the software:

· A multitimbral midi controlled synthesizer with custom program change implementation.

· Audio effect section with realtime control.

· An early version of the partikkel opcode for granular synthesis.

· Live sampling of audio segments for use as source material with the partikkel instrument.

The Marimba Lumina, the pedal board (as described under Hardware), and a 16 channel midi fader box were used to control the instrument.

All compositions by Motorpsycho (Ryan/Sæther). My role in the project was as an improviser and as a performer of composed parts. I did the sound design and instrumentation of my own instrumental parts. I also had a certain influence on the overall arrangements, in keeping with the traditional work ethic of rock bands.

Un Chien d’espace – Brussel

This song usually grows into a rather lengthy and open improvisation when performed live, and as such it serves well as documentation of improvisation in Motorpsycho. Recordings of three different performances are provided, so as to illustrate the differences from performance to performance. The opening motif can be heard at 3:00, and the composed part lasts until 8:20. From 8:20 to 26:55 it is improvised, and a return to the composed part is played from 26:55 until the end.

Un Chien d’espace – Darmstadt

The composed part starts at 5:20, lasting until 10:20. The improvised part from 10:20 to 29:50 exhibits extensive use of ImproSculpt’s partikkel generator with live sampled input. The composed part returns at 29:50 until end.

Un Chien d’espace – Rimini

The composed part starts at the beginning of the recording, lasting until 5:00. The improvised part lasts from 5:00 to 18:00. The composed part returns at 18:00 until the end.

Trigger Man – Rimini

This example shows a relatively straight rock song with an instrumental and improvised midsection. The instrumental part starts at 2:40 and the improvisation lasts from 3:35 until 8:10.

Sancho Says – Halden

This example shows a relatively straight rock song with an instrumental midsection. The midsection (2:15 to 5:10) lends itself to composed instrumental parts, which are freely interpreted from performance to performance. The material for the instrumental part was co-composed by me during rehearsals with the band. My chord voicings during the vocal verses (e.g. at 0:30) were orchestrated so as to help the intonation of the vocals.

Flyndre (Flounder)

The Flyndre installation was built over an existing sculpture by the sculptor Nils Aas, which carries the same title and is located at Straumen in Inderøy, Norway.

Flyndre makes use of a loudspeaker technique by which the sound is transferred to the metal of the sculpture. The music is influenced by parameters such as high tide and low tide, the time of year, light and temperature, and thus reflects the nature around the sculpture. The sculpture and sound installation are located near a tidal water stream, which is the reason for using tidal water data and moon phase data as input parameters. The exhibition time is 10 years, from September 2006 to 2016, and the music develops continually over the span of the exhibition.

I was the composer, programmer and technical designer of the installation. The producer for the installation was Kulturbyrået Mesén, and the technical producer was Soundscape Studios. Students from NTNU were involved in configuring and testing the network transport of sensor and audio data. More information and a realtime audio stream from the installation can be found at http://www.flyndresang.no

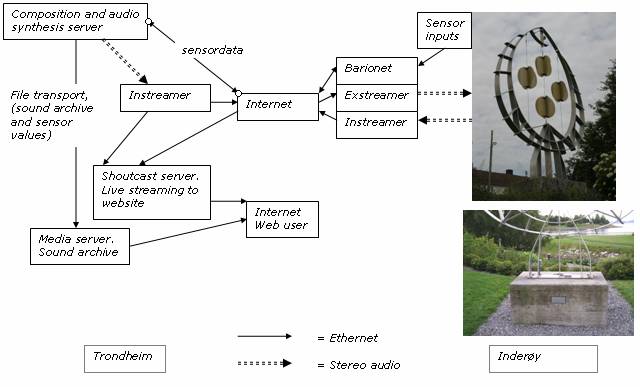

A chart of the signal flow for the Flyndre installation.

Equipment situated in Trondheim on the left side of the illustration, equipment in Inderøy placed on the right side.

Communication between Inderøy and Trondheim via internet.

The full list of input parameters:

· Light intensity

· Light average (average of light intensity during the last 5 minutes)

· Temperature

· Temperature average (average of today’s temperature and yesterday’s temperature at the same time of day).

· Moon phase

· Moon illuminated (percentage of moon illumination, e.g. 100% at full moon)

· Water level (for tidal water)

· Water speed (tidal water moving through the Straumen tidal water stream)

· Water direction (inwards/outwards for the tidal water stream)

· Time of day (hours, minutes)

· Time of year (date, month)

· Weekday
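Two of the parameters in the list above are derived values rather than raw sensor readings. The Python sketch below shows one straightforward way of computing them as described (a 5-minute rolling average of light intensity, and the mean of today's and yesterday's temperature at the same time of day). It is an illustration only; the class and function names are hypothetical and not taken from the Flyndre code.

```python
from collections import deque


class RollingAverage:
    """Average of the samples received during the last `window` seconds."""

    def __init__(self, window=300.0):
        self.window = window
        self.samples = deque()  # (timestamp in seconds, value)

    def add(self, timestamp, value):
        """Add a sample and return the current windowed average."""
        self.samples.append((timestamp, value))
        # Drop samples older than the window; the newest sample always remains.
        while self.samples[0][0] < timestamp - self.window:
            self.samples.popleft()
        return sum(v for _, v in self.samples) / len(self.samples)


def temperature_average(today_now, yesterday_same_time):
    """Mean of today's reading and yesterday's reading at the same time of day."""
    return (today_now + yesterday_same_time) / 2
```

With a 300-second window, light readings of 10, 20 and 30 at 0, 100 and 400 seconds give averages of 10, 15 and 25: by the third reading, the first one has fallen out of the window.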

The software used in the Flyndre installation represents the state of ImproSculpt at the opening date; this fork of development has not been changed significantly since September 2006. The code used in Flyndre can be viewed at http://improsculpt.cvs.sourceforge.net/improsculpt/Flyndre/. The ImproSculpt software has of course been developed further since Flyndre opened, but the changes have not been committed to the Flyndre installation. An important aspect of the idea behind the installation was that all changes in the generated music should stem from variations in the input parameters, and that the music should develop over the course of the 10 years without intervention from the composer. The compositional modules used are “intervalMelody”, “tidal water sound”, “randPlayer” and “cloudPlayer”. An explanation of these compositional modules can be found in the “The software, ImproSculpt” paragraph of the current document. The selection of which compositional modules are active at any given time, the desired number of simultaneously active modules, and the control parameters for each module are determined by the input parameters. The code for this mapping can be read at http://improsculpt.cvs.sourceforge.net/improsculpt/Flyndre/flyndra/control/eventCaller.py?revision=1.1&view=markup, starting at line 1171 in the method executeInputParameterMappings().

A sound archive is generated by sampling 1 minute fragments of the installation approximately once every day. The full sound archive can be found at http://www.flyndresang.no/lydarkiv/. A number of selections from the sound archive of the installation are to be found in the audio material submitted as artistic documentation for the research project. The selections have file names representing the date and time of recording, e.g. “FlyndraOutput_2006_9_13_23_14.mp3” was recorded on the 13th of September 2006 at 11:14 pm. The input parameter values at the time of the recording are stored in an xml file with the same filename prefix (e.g. “FlyndraOutput_2006_9_13_23_14.xml”), and the xml file is used as data for the sensor values animation for the sound archive found on the web site.
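Because the archive file names encode the recording time, they can be decoded mechanically. The following Python sketch parses names of the form shown above (year, month, day, hour on a 24-hour clock, minute, without zero padding, as in “FlyndraOutput_2007_2_25_22_6”) and derives the name of the matching xml file. The function names are hypothetical; the sketch assumes only the naming scheme described in this paragraph.

```python
import re
from datetime import datetime

# File names look like "FlyndraOutput_2006_9_13_23_14.mp3": year, month, day,
# hour (24-hour clock) and minute, without zero padding; the extension is optional.
ARCHIVE_NAME = re.compile(
    r"FlyndraOutput_(\d{4})_(\d{1,2})_(\d{1,2})_(\d{1,2})_(\d{1,2})(?:\.\w+)?$"
)


def recording_time(filename):
    """Return the recording time encoded in an archive file name."""
    match = ARCHIVE_NAME.search(filename)
    if match is None:
        raise ValueError(f"not a sound archive file name: {filename!r}")
    year, month, day, hour, minute = map(int, match.groups())
    return datetime(year, month, day, hour, minute)


def sensor_data_file(filename):
    """Name of the xml file holding the input parameters for a recording."""
    stem = filename.rsplit(".", 1)[0] if "." in filename else filename
    return stem + ".xml"
```

For example, `recording_time("FlyndraOutput_2006_9_13_23_14.mp3")` returns 13 September 2006 at 23:14, and `sensor_data_file` maps the same name to “FlyndraOutput_2006_9_13_23_14.xml”.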

As a guide to the sound archive examples, the following list explains which compositional modules were active in some of the examples.

FlyndraOutput_2006_9_13_23_14

intervalMelody with 2 voices, one played on a synthesized vocal timbre and one on a marimba timbre.

FlyndraOutput_2006_9_14_14_10

tidalWaterSound and randPlayer.

FlyndraOutput_2006_9_20_18_42

randPlayer and cloudPlayer.

FlyndraOutput_2007_2_25_22_6

intervalMelody with 2 voices, cloudPlayer using a recording of the lullaby “Når lykkeliten kom til verden” (sung by my mother Inger Jorid) as source material. Recorded songs are used quite a bit as source material for the cloudPlayer in Flyndre. The songs are generally folk songs or popular songs with reference to a particular month.

FlyndraOutput_2007_3_30_11_46

randPlayer and cloudPlayer. The cloudPlayer uses a recording of my daughter Ylva singing.

FlyndraOutput_2007_5_31_15_41

Starts with a few seconds of intervalMelody, continues with cloudPlayer using a recording of my daughters Ylva and Tyra singing. The example shows the transition from one set of compositional modules to another.

FlyndraOutput_2007_7_16_0_10

cloudPlayer late night.

Kanon

Øyvind Brandtsegg: ImproSculpt

Thomas T. Dahl: Guitar

Mathias Eick: Trumpet (for the Molde performance)

Arve Henriksen: Trumpet (for the Trondheim performance)

Kenneth Kapstad: Drums

Mattis Kleppen: Bass

Hans Magnus Ryan: Guitar

Bent Sæther: Bass

Kanon was a commissioned concert for Trondheim Jazzfestival 2006. It was performed in Trondheim in August 2006, with a second performance in Molde in July 2007. In addition to the original composed sections, it contains numerous references to pop and rock songs. The title translates as both “Canon” and “Cannon”. The main intention of writing the piece was to create a platform for improvisation. The guideline given to the ensemble was generally to avoid a “solo queue” where each musician in turn played a solo. Rather, they were encouraged to fight for improvisational space, preferably with more than one musician improvising at a time. In some sections of the piece, one of the performers might be assigned the role of “soloist”, but this role would be regarded more as a “you start” kind of assignment. The references to pop and rock songs were intended as stylistic cues indicating what kind of energy level and timbre was expected from the final result.

The Kanon piece was composed and arranged by me, except where references are noted. One original section (a bass riff leading to an improvised section of 5-7 minutes) was composed by Kleppen and Sæther. Obviously, a piece that relies on a group effort in improvisation will include significant contributions from each member of the group, both in terms of the improvised content and in terms of small changes to arrangements during rehearsal.

The intervalMelody of ImproSculpt was used live for the first time in the performance of Kanon. Other parts of ImproSculpt used were the partikkel module with live sampled input, and obviously the multitimbral synthesizer and effects controlled by the Marimba Lumina. The performance in Molde was one of my earliest uses of the Lemur as a controller.

An effort has been made to try to limit the amount of material submitted, so excerpts from the two performances of Kanon have been made. The excerpts are taken from the first performance, except where noted (when the excerpt name contains “i Molde” it is taken from the second performance). The first performance was recorded on multitrack, with subsequent mixing and mastering. The second performance was recorded directly in stereo from the front-of-house mixing board. Differences in audio quality and perceived loudness are due to the difference in recording techniques.

K-A-N-O-N

Morse code for the letters in KANON was used to devise the rhythmic structure of this piece. For example, the Morse code for the letter K is “-.-” (long-short-long), and the durations derived from the Morse code were used as durations for the chords. The piece uses two different chords, one for “long” and one for “short”.

In other parts of the performance, Morse code for various combinations of the letters K, A, N, O, N was used to construct cue motifs. The motifs were used as transitions from song to song, or as cues for ensemble activity within longer improvised sections.

The Morse code for the letters: (K = -.-), (A = .-), (N = -.), (O = ---), (N = -.)
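The mapping from letters to chord durations can be made explicit with a few lines of Python. The Morse table below is standard International Morse code and matches the letters listed above; the chord labels and the duration values (2 beats for long, 1 beat for short) are placeholders, since the actual durations used in the piece are not given here, and gaps between letters are ignored.

```python
# Morse code for the letters used in the piece (standard International Morse).
MORSE = {"K": "-.-", "A": ".-", "N": "-.", "O": "---"}


def morse_rhythm(letters, short=1, long=2):
    """Turn letters into (chord, duration) events: one chord per Morse symbol.

    A dash ("long") plays the long chord and a dot ("short") plays the short chord.
    The durations (in beats) are placeholders, not values from the score.
    """
    events = []
    for letter in letters.upper():
        for symbol in MORSE[letter]:
            if symbol == "-":
                events.append(("long_chord", long))
            else:
                events.append(("short_chord", short))
    return events
```

Under these assumptions, K gives long-short-long, the whole word KANON gives 12 chords (8 long, 4 short) lasting 20 beats, and the two-letter motif KA used at the end of “Thursday” gives long-short-long-short-long.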

IntervalSeries

The interval series [+10, +3, +3, -2] was used as the only source material for collective improvisation. Each player was instructed to use the interval series in any transposition or permutation, and to adhere to the series as strictly as possible while listening to the whole and trying to make coherent phrases. No limitations on the choice of rhythm were imposed.
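The material available to the players can be enumerated with a short Python sketch: transposition changes only the starting pitch, while permutation reorders the intervals. Here “permutation” is read as a reordering of the four intervals; a player could of course also read it more loosely (e.g. as retrograde or inversion), which this sketch does not cover. The function names are hypothetical.

```python
from itertools import permutations

SERIES = (10, 3, 3, -2)  # semitone steps from the text: [+10, +3, +3, -2]


def realize(series, start_pitch):
    """Pitches (MIDI numbers) obtained by applying the steps from start_pitch."""
    pitches = [start_pitch]
    for step in series:
        pitches.append(pitches[-1] + step)
    return pitches


def orderings(series):
    """All distinct reorderings of the interval series."""
    return sorted(set(permutations(series)))
```

Starting on middle C (60), the series in its original order gives 60, 70, 73, 76, 74. Because the interval +3 occurs twice, there are only 12 distinct orderings (4!/2!) rather than 24, each of which can be started on any of the 12 pitch classes.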

Thursday

A rendition of the song Thursday by the group Morphine. In the context of the artistic evaluation, the improvised section led by me may be of interest (starting at 1:40 and lasting until 3:00). The final 8 bars are made up of a Morse code motif built on the letters KA.

ExodusTears

This piece contains references to the Bob Marley song “Exodus” (the underlying groove for the first section of the piece), and the Police song “Driven to Tears” (melody played in Clavinet during the first section of the piece, and the underlying groove for the second section after the tempo change).

IntervalMelody

An improvised piece based on the use of the intervalMelody module in ImproSculpt. The rhythmic motif during the last part of the piece was built on Morse code for the letters NO, proceeding attacca to the next song, “Big Block” by Jeff Beck (fades out after a few seconds).

ExodusTears in Molde

This is the same part of Kanon as “ExodusTears”, but this excerpt was taken from the second performance of Kanon, in Molde 2007. Only the first three and a half minutes of the piece are included in the excerpt. In the context of the artistic evaluation, the interplay between Eick and me may be noted. The single voice partikkel module of ImproSculpt is used for granular processing, using the trumpet solo as live sampled source.

IntervalMelody i Molde

This is the same part of Kanon as “IntervalMelody”, but this excerpt was taken from the performance in Molde 2007.

Feedback instrument performances

May 2007:

Øyvind Brandtsegg: ImproSculpt

Ingrid Lode: vocal, physical manipulation of aluminium tubes

October 2007:

Øyvind Brandtsegg: ImproSculpt

Mathias Eick: Trumpet, physical manipulation of aluminium tubes

Feedback_LodeBrandtsegg_mai_2007

The piece was performed on May 8th 2007, for the opening of the Archive Centre at the Dora bunker in Trondheim. A vague sketch of the overall form and mood was agreed upon by the performers, in all other respects the piece was improvised.

Three aluminium tubes were used as resonators, each with a microphone sending audio to the ImproSculpt audio inputs. The only sounds used were the feedback instrument with the aluminium tubes, vocals, and granular synthesis (partikkel opcode) using live sampled segments. The characteristics of the resonators were explored by hitting and scratching of the aluminium tubes, singing into the tubes, and dragging the tubes across the concrete floor.

The performance exhibits an interesting aspect of improvisational interplay when using ImproSculpt’s feedback instrument. Lode manipulated the aluminium tubes by singing into them or covering the tube’s end and thereby changing the resonating characteristics, while I modified the feedback instrument parameters in ImproSculpt. When the aluminium tube resonators and the electronic feedback instrument are viewed as a whole, this creates a musical situation where two performers simultaneously play the same instrument. A humorous analogy would be playing four-handed piano on a piano with only one key. The actions of one performer directly affect what the other performer can do on his or her instrument, e.g. modifying the feedback instrument parameters in ImproSculpt will change the way the aluminium tubes respond to singing. This created an interplay situation where each performer had to be more attentive than usual to the actions of the other.

Feedback_EickBrandtsegg_october2007

The piece was performed on October 17th 2007, as an opening act for the show “Technoport Awards” in Olavshallen, Trondheim. The performance also featured dancer Ingeborg Trelstad, choreographed by Susanne Rasmussen.

As with the feedback performance in May 2007, this one also shows the complex interplay between the aluminium resonators, the feedback system and the two performers. Some distortion can be heard on the trumpet; this was not intentional but due to a technical error with the recording.

The partikkel module may be heard clearly, starting at 2:00 and evolving into harmonic interplay between trumpet and partikkel generator.

“Irrganger” installation (Labyrinths)

Øyvind Brandtsegg: Composition, Programming

Viel Bjerkested Andersen: Sculpture

This installation was made for a new building at Steinkjer videregående skole (Steinkjer College). It consists of three sculptures and two “audio walkways” (glass corridors). The sculptures are made of long aluminium tubes, in configurations representing patterns of (people’s) movement within the building. The idea was that one’s patterns of movement within the building throughout several years of attending the school are preserved as a personal memory. In a similar manner to people’s movement, audio flows through the sculptures in a feedback loop. This is achieved by mounting a speaker and a microphone in each sculpture, creating audio feedback. The feedback loop is controlled by ImproSculpt’s feedback instrument, which slowly suppresses individual feedback frequencies as soon as they appear in the audio spectrum. The result is a continuously changing timbre evolving around the resonant frequencies that are part of the acoustics of the sculptures. In some ways, this technique is similar to that used in Agostino Di Scipio’s “Modes of Interference”. The audio signals from the feedback loops are live sampled and used as source material for audio particles (generated by ImproSculpt’s cloudPlayer) presented in the audio walkways. Each of the two audio walkways is made up of four loudspeakers mounted so as to transmit audio vibrations to glass panes in corridors connecting the new building to adjoining older buildings. The audio particles represent a fragmented and transposed version of the audio system present in the sculptures.
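The principle of slowly suppressing individual feedback frequencies can be sketched as a per-band gain control loop. The Python below is a minimal illustration under stated assumptions, not ImproSculpt’s feedback instrument: it assumes the spectrum has already been split into frequency bands (e.g. by an FFT or a filter bank), and the threshold, attenuation rate, recovery rate and gain floor are hypothetical values.

```python
def update_gains(magnitudes, gains, threshold=0.5, cut=0.9, recover=1.01, floor=0.05):
    """One control step of a simple band-wise feedback suppressor.

    magnitudes: measured level per frequency band (before the gains are applied)
    gains: current attenuation per band, 1.0 meaning no attenuation
    A band whose output level exceeds `threshold` is pulled down a little;
    all other bands slowly recover toward unity gain.
    """
    new_gains = []
    for magnitude, gain in zip(magnitudes, gains):
        if magnitude * gain > threshold:
            gain = max(gain * cut, floor)  # attenuate a ringing band gradually
        else:
            gain = min(gain * recover, 1.0)  # let quiet bands recover
        new_gains.append(gain)
    return new_gains
```

Called repeatedly, a band that keeps ringing is pulled down until its output sits around the threshold, while the other bands stay at or return to full gain. Because suppressed bands are allowed to recover, different resonances of the sculpture can take over over time, which is one way of getting a continuously changing timbre rather than a fixed notch filter.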

As the installation contains 7 different audio output channels distributed over a large area, it is not straightforward to present a true recording of the installation in this context. The following audio examples are taken from separate parts of the installation. All examples were recorded simultaneously, with a recording duration of 5 minutes to show how the installation may change over time. As the feedback eliminator algorithm suppresses different harmonics in the audio signal, the sound will change over time. This is more apparent in sculpture 3 (see below) than in sculpture 1. During the recording, I also walked around to different parts of the installation, tapping on the sculptures and shouting into them. This was done to give an example of how the sculptures will react to sounds from the audience (the users of the building, e.g. teachers and pupils at the school).

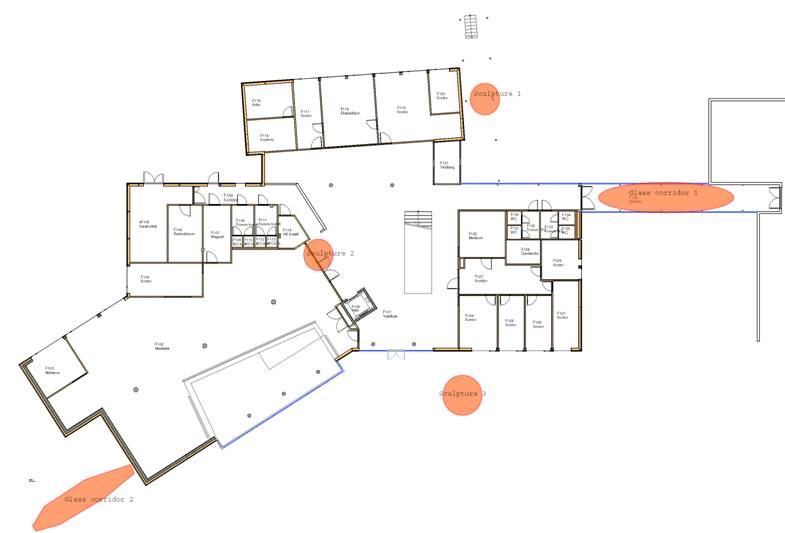

Drawing of the building with installations indicated in red.

A chart of the signal flow for the Irrganger installation

Sculpture 1 (at main entrance):

Mono recording, as this sculpture only has 1 speaker and 1 microphone. Tapping and shouting can be heard from 1:55 to 2:08. A low level audio feed signal from sculpture 3 can be heard from 4:15, quite clearly (voice) at 4:30. The signal feed from sculpture 3 to sculpture 1 was routed in software, as a source of variation for sculpture 1, as this sculpture otherwise appears to have a pretty static harmonic behaviour.

Sculpture 1, at main entrance

Sculpture 2 (in foyer), not recorded:

The speaker mounted in this sculpture has been disabled, as the sound disturbed the normal use of the foyer. However, the sculpture contains a microphone used for capturing the sound of people working in the foyer. These sounds are recorded and used as source material for the audio particle generators active in the glass corridors, in the same manner as the sounds from sculptures 1 and 3 are used as source material for audio particles.

Sculpture 2, in foyer

Sculpture 3 (at the back of the building):

Stereo recording, this sculpture has 2 speakers and 2 microphones. As such, it contains 2 feedback loops that interact acoustically. Each microphone is hard panned to the left and right channels of the audio file. Tapping and shouting can be heard from 4:15 to 5:00.

Sculpture 3, at the back of the building

Glass corridors:

The glass corridors (audio walkways) each consist of a 4 speaker system. However, for practical playback, the recordings were made in stereo. This means that the 4 channel audio signal was recorded to two stereo files, with channels 1 and 2 recorded to one file and channels 3 and 4 recorded to the other.

The tapping being done on sculpture 3 can be heard as audio particles from 4:17 to 5:00. Particles of a voice can also be heard at 0:48, but the recording of this voice at the sculpture inputs is not present on any of the audio examples.

One of the glass corridors while mounting equipment. One glass speaker (exciter) indicated by the red circle.

Aleph

Øyvind Brandtsegg: ImproSculpt

Kenneth Kapstad: Drums

Stian Westerhus: Guitar, electronics

This ensemble was started in 2006, initially as a duo (Brandtsegg/Westerhus). As part of the research project, it provides a platform for exploring musical ideas related to the use of ImproSculpt and to the improvisational practices inspired by it.

Intro_to_Kapstad_on_dokk

This piece was composed by Kapstad and Westerhus and consists of rhythmical riffs for the two of them. My role is free; the general guideline from the other two musicians was along the lines of “think of John Zorn and see what you can come up with”. It was performed in April 2007 as part of Kapstad’s work for his master’s degree in Improvisation (NTNU).

Live_at_Steinkjer_16062007 part1

Part 1 of the live recording; the full description is given under part 2.

Live_at_Steinkjer_16062007 part2

This is the full-length recording of a performance given in June 2007. The performance may be described as free improvisation. I had prepared some composed sketches to be used if needed, but these were almost never used, as we simply forgot about them while playing. An exception is the theme played on vibraphone at 4:00 in part 2, based on my 12-tone composition “Sjakktrekk” (“Chess move”). The 12-tone row of the composition is the sole material for improvisation (on Marimba Lumina, vibraphone timbre) until 13:00.

The recording shows examples of the use of different modules in ImproSculpt. The following sections contain clear examples of the individual modules:

- the feedback instrument: 0:00 to 5:00 in part 1, and 0:00 to 6:00 in part 2

- the intervalMelody module: 13:30 to 17:20 in part 2

- the single voice partikkel generator: 17:30 to 30:00 in part 1, and 24:30 to 34:10 (end) in part 2

The Marimba Lumina, the pedal board and the Lemur were used as controllers.

The Concert, December 1st 2007

Øyvind Brandtsegg: ImproSculpt, Marimba Lumina

Kenneth Kapstad: Drums

Mattis Kleppen: Bass

Ingrid Lode: Vocals

Stian Westerhus: Guitar, electronics

Live at Dokkhuset December 1st 2007

This concert represents the final public presentation of the artistic work in the research project. A selection of short composed themes was used to build a framework for improvisation. The following list indicates sections where different improvisational and compositional methods were utilized. For composed themes, the times indicate the point where the theme is played as notated, whereas improvisation on the theme usually happens both before and after the specified time. The list contains links to pdf files with traditional notation of the composed themes.

0:00:00 Feedback improvisation

- using aluminium tubes as resonators with ImproSculpt’s feedback instrument

- also using partikkel manipulation of live-sampled vocals

0:07:50 RandPlay improvisation

- using live sampled vocals as source material

0:17:10 “Sjakktrekk”

pdf (theme), pdf (12 tone series)

- using serial improvisation

0:26:35 “5-bass”

0:31:00 Rplay improvisation

- using live sampled chords (drums/guitar/bass) as source material

0:37:08 “CaChords”

- using pointillistic improvisation

0:43:00 (“4-3” - bass riff from first 8 bars)

0:46:30 “4-3” - full theme

- using the interval vector harmonizer in the improvisation from ca. 0:48:10

0:52:50 Interval Melody improvisation

1:03:40 (“RiffDur” – bass riff)

1:04:55 “RiffDur” – full theme

1:07:45 End